Machine Learning API: Build Intelligent Apps Fast

Machine learning APIs have revolutionized how developers integrate artificial intelligence into applications. Instead of building ML models from scratch—requiring expertise in data science, extensive computing resources, and months of development time—you can now embed sophisticated AI capabilities into your software with a few lines of code. Whether you need image recognition, natural language processing, speech synthesis, or predictive analytics, ML APIs provide ready-made solutions that scale with your demands.

This guide explores everything you need to know about machine learning APIs: how they work, leading providers in the market, key selection criteria, implementation best practices, and real-world use cases that demonstrate their transformative potential.

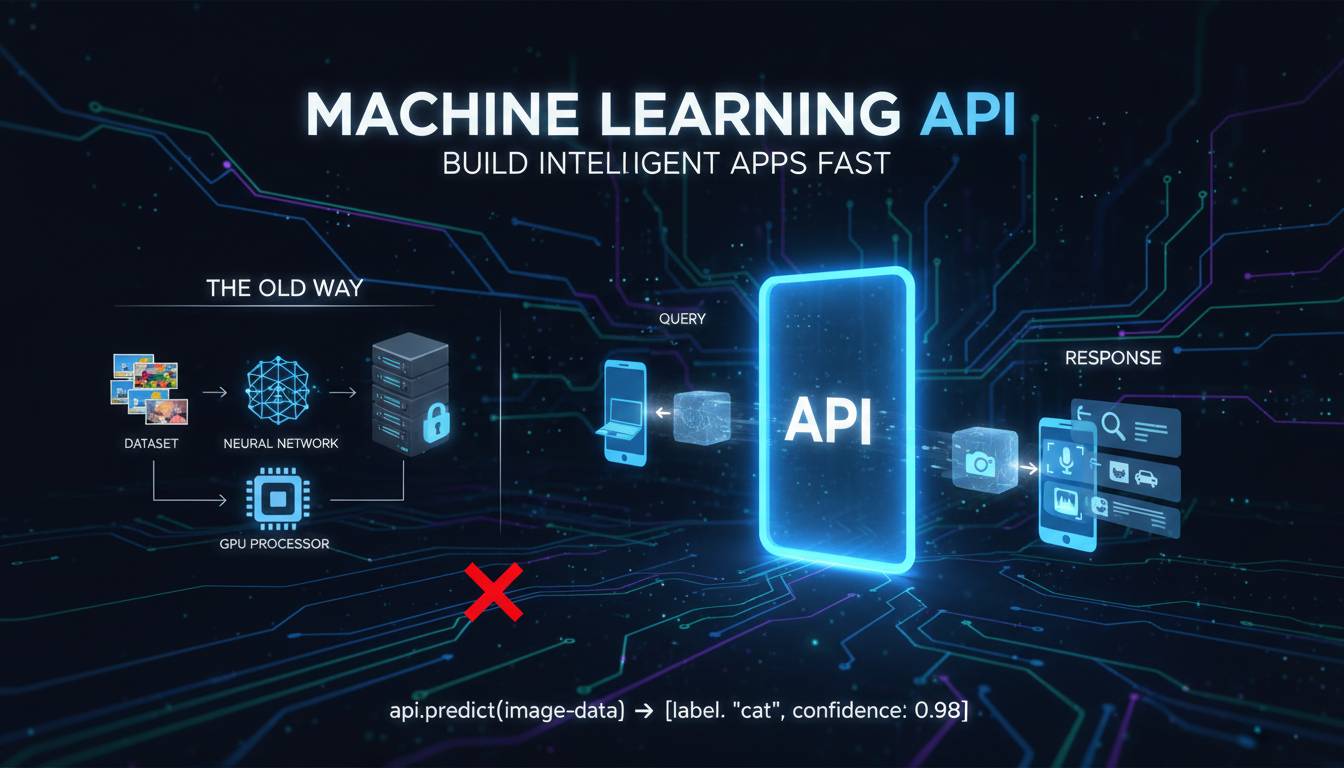

What Is a Machine Learning API?

A machine learning API (Application Programming Interface) is a cloud-based service that exposes pre-trained ML models through standardized HTTP requests. Rather than training, deploying, and maintaining your own models, you send data to the API and receive predictions or insights in return.

The workflow typically follows this pattern: your application sends input data (an image, text snippet, audio file, or structured data) to the API endpoint via a REST or GraphQL call. The service runs that input through its trained model and returns structured results, often in JSON format, that your application can parse and use.
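As a minimal sketch of that round trip, the snippet below builds a JSON request body and parses a JSON reply. The field names (`document`, `labels`, `score`) and the sample response are hypothetical and do not reflect any specific provider's schema; the HTTP call itself is left out so the example runs offline.

```python
import json

def build_request(text: str) -> str:
    """Serialize the input into a JSON request body (hypothetical schema)."""
    return json.dumps({"document": {"type": "PLAIN_TEXT", "content": text}})

def parse_response(body: str) -> list[tuple[str, float]]:
    """Extract (label, score) pairs from a JSON response body."""
    payload = json.loads(body)
    return [(item["label"], item["score"]) for item in payload.get("labels", [])]

# Hypothetical response, standing in for what the HTTP call would return.
sample_response = '{"labels": [{"label": "positive", "score": 0.92}]}'

request_body = build_request("Great product, fast shipping.")
predictions = parse_response(sample_response)
```

In a real integration, `request_body` would be sent to the provider's endpoint and `parse_response` would receive the body of the HTTP reply.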

Key characteristics of ML APIs:

- Pre-trained models: Providers invest heavily in training sophisticated models on massive datasets, then make them available as services

- Managed infrastructure: The provider handles scaling, uptime, model updates, and hardware optimization

- Pay-per-use pricing: Most services charge based on the number of requests or compute time, allowing cost-effective experimentation

- Continuous improvement: Leading providers regularly update models with better accuracy and new features

Major cloud platforms—including Amazon Web Services, Google Cloud, Microsoft Azure, and specialized providers like OpenAI and Cohere—offer hundreds of ML APIs covering diverse capabilities.

Leading Machine Learning API Providers

The ML API market has matured significantly, with several major players offering comprehensive suites of intelligent services. Understanding their strengths helps you select the right provider for your specific needs.

Amazon Web Services (AWS)

AWS provides one of the broadest ML API offerings, spanning Amazon SageMaker for custom models and a set of specialized pre-trained AI services. Rekognition handles image and video analysis, including object detection, facial recognition, and content moderation. Comprehend delivers natural language processing capabilities like entity extraction, sentiment analysis, and language detection. Polly converts text to lifelike speech using neural text-to-speech technology, while Lex enables building conversational interfaces.

AWS pricing varies by service but typically follows pay-per-request models with free tiers for initial experimentation.

Google Cloud Platform

Google’s ML APIs leverage the same technology powering Google’s own products. Vision AI provides image labeling, face detection, and optical character recognition. Natural Language API offers sentiment analysis, entity recognition, and content classification. Speech-to-Text and Text-to-Speech handle audio conversions, while Translation API supports over 100 languages.

Google Cloud distinguishes itself through deep learning research integration and often leads benchmarks for model accuracy.

Microsoft Azure

Azure’s Cognitive Services portfolio (since rebranded as Azure AI services) includes Vision, Speech, Language, and Decision APIs. Azure OpenAI Service provides access to GPT-4 and other large language models, making it particularly attractive for generative AI applications. Azure Computer Vision and Azure Form Recognizer (now Azure AI Document Intelligence) excel at document processing and extraction tasks.

Azure integrates tightly with Microsoft ecosystem tools, benefiting organizations already using Microsoft 365 and Dynamics.

Specialized Providers

Beyond cloud giants, specialized providers focus on specific capabilities:

- OpenAI: Leading provider of large language models (GPT-4, GPT-4o) for text generation, coding assistance, and conversational AI

- Cohere: Enterprise-focused LLMs with emphasis on data privacy and customization

- Anthropic: Claude family of AI assistants designed for helpful, harmless, and honest interactions

- Hugging Face: Community-driven platform with thousands of community-contributed models alongside paid endpoints

How to Choose the Right ML API

Selecting an ML API requires evaluating multiple factors beyond just capability matching. Consider these dimensions when making your decision.

Accuracy and Performance

Model accuracy varies significantly between providers and even between versions of the same service. For critical applications, test multiple providers with your specific data types. Look for published benchmark results, but prioritize testing with representative samples from your use case.
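One way to run such a comparison is a small harness that scores each provider against the same labeled samples. The sketch below is illustrative only: `provider_a` and `provider_b` are stand-in functions, and in practice each would wrap a real API call and your own labeled data.

```python
from typing import Callable

def accuracy(predict: Callable[[str], str], samples: list[tuple[str, str]]) -> float:
    """Fraction of labeled samples for which predict() returns the expected label."""
    if not samples:
        raise ValueError("need at least one labeled sample")
    correct = sum(1 for text, expected in samples if predict(text) == expected)
    return correct / len(samples)

# Stand-in predictors (hypothetical); replace with wrappers around real APIs.
def provider_a(text: str) -> str:
    return "positive" if "good" in text else "negative"

def provider_b(text: str) -> str:
    return "positive"

samples = [
    ("good value", "positive"),
    ("broke in a week", "negative"),
    ("really good", "positive"),
    ("not worth it", "negative"),
]

scores = {
    "provider_a": accuracy(provider_a, samples),
    "provider_b": accuracy(provider_b, samples),
}
```

A handful of samples is enough to show the mechanics, but a meaningful comparison needs a sample set large and varied enough to represent production traffic.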

Pricing Structure

ML API pricing typically follows several models:

| Pricing Model | Description | Best For |
|---|---|---|
| Per-request | Fixed cost per API call | Predictable workloads |
| Compute-based | Charges for processing time | Variable complexity tasks |
| Tiered subscriptions | Monthly fees for guaranteed capacity | High-volume applications |
| Free tiers | Limited free requests for testing | Evaluation and development |

Calculate expected costs by estimating request volume and multiplying by per-request fees. Watch for additional charges like data transfer costs or storage fees.
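The arithmetic can be sketched as a small helper. The prices, free-tier allowance, and fixed fee below are made-up numbers for illustration, not any provider's actual rates.

```python
def estimate_monthly_cost(
    requests_per_month: int,
    price_per_request: float,
    free_requests: int = 0,
    fixed_fees: float = 0.0,
) -> float:
    """Billable requests times the unit price, plus fixed extras (transfer, storage)."""
    billable = max(0, requests_per_month - free_requests)
    return billable * price_per_request + fixed_fees

# Hypothetical figures: 50,000 requests, $0.0015 each, 1,000 free, $20 in extras.
cost = estimate_monthly_cost(50_000, 0.0015, free_requests=1_000, fixed_fees=20.0)
```

With these assumed figures, 49,000 billable requests at $0.0015 plus $20 in fixed fees comes to $93.50 per month.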

Latency Requirements

Response time matters for real-time applications. Cloud-based APIs typically deliver responses within 100-500 ms for simple tasks, though complex operations may take several seconds. If your application requires sub-100 ms responses, consider edge deployment options or on-premises solutions.
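To check whether a candidate API meets your latency budget, measure it rather than relying on typical figures. The sketch below times repeated calls and reports a nearest-rank percentile; `fake_call` is a placeholder for a real request, and the helper names are illustrative.

```python
import math
import time
from typing import Callable

def measure_latencies_ms(call: Callable[[], object], runs: int = 20) -> list[float]:
    """Time `runs` invocations of `call` and return each duration in milliseconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies

def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100.0 * len(ordered)))
    return ordered[rank - 1]

def fake_call() -> None:
    """Placeholder for a real API request."""
    time.sleep(0.001)

latencies = measure_latencies_ms(fake_call, runs=5)
p95 = percentile(latencies, 95)
```

Tail percentiles such as p95 or p99 are usually more informative than the average, since a few slow responses are what users notice.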

Data Privacy and Compliance

ML APIs often process sensitive data, so compliance matters. Key considerations include:

- Data handling policies: Does the provider use your data to improve its models?

- Encryption: Is data encrypted in transit and at rest?

- Compliance certifications: Look for SOC 2 reports, HIPAA eligibility, GDPR commitments, or industry-specific certifications

- Data residency: Can you specify where your data is processed?

Enterprise-focused providers like Microsoft Azure and Cohere offer enhanced privacy controls and data processing agreements.

Integration and Developer Experience

Evaluate SDK availability, documentation quality, code examples, and community support. The easiest API to integrate saves significant development time. Most major providers offer SDKs for Python, JavaScript, Java, and other popular languages.

Implementation Best Practices

Successfully integrating ML APIs requires more than just making API calls. Follow these best practices for robust, maintainable implementations.

Error Handling and Resilience

ML APIs can fail due to network issues, rate limiting, or service outages. Implement comprehensive error handling:

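    import time
    from typing import Optional

    import requests

    # Placeholder values: substitute your provider's endpoint and credentials.
    API_ENDPOINT = "https://api.example.com/v1/analyze"
    API_KEY = "your-api-key"

    def call_ml_api(image_data: bytes, max_retries: int = 3) -> Optional[dict]:
        """POST an image to the API, retrying timeouts with exponential backoff."""
        for attempt in range(max_retries):
            try:
                response = requests.post(
                    API_ENDPOINT,
                    files={"image": image_data},
                    headers={"Authorization": f"Bearer {API_KEY}"},
                    timeout=30,
                )
                response.raise_for_status()
                return response.json()
            except requests.exceptions.Timeout:
                # Wait before the next attempt; skip the wait after the last one
                if attempt < max_retries - 1:
                    time.sleep(2 ** attempt)  # Exponential backoff: 1s, 2s, ...
            except requests.exceptions.RequestException:
                # Log error, notify monitoring, then propagate to the caller
                raise
        return None  # Every attempt timed out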

Implement circuit breakers to prevent cascade failures when services experience issues.
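A circuit breaker can be sketched in a few lines. This is a simplified illustration rather than a production implementation: the class name, thresholds, and the injectable `clock` parameter (used to make the behavior testable) are all assumptions.

```python
import time
from typing import Callable, Optional

class CircuitOpenError(RuntimeError):
    """Raised when a call is skipped because the circuit is open."""

class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after  # cool-down in seconds
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, func: Callable[[], object]) -> object:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; skipping call")
            # Cool-down elapsed: half-open state, let one trial call through.
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

Wrapping each API call as `breaker.call(lambda: call_ml_api(data))` lets the application fail fast during an outage instead of stacking up requests that are likely to time out.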

Input Validation and Sanitization

Validate all input data before sending to APIs. Check file types, size limits, and content format. Sanitize text inputs to prevent prompt injection attacks when working with LLMs.
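The checks above might look like the following sketch. The allowed types and size limits are assumed values; use the limits your provider documents. Stripping control characters and capping length is only a first layer and does not by itself stop prompt injection.

```python
ALLOWED_IMAGE_TYPES = {"image/jpeg", "image/png"}  # assumed allow-list
MAX_IMAGE_BYTES = 5 * 1024 * 1024                  # assumed 5 MiB limit
MAX_TEXT_CHARS = 4000                              # assumed text limit

def validate_image(data: bytes, content_type: str) -> None:
    """Raise ValueError if the upload has a disallowed type or size."""
    if content_type not in ALLOWED_IMAGE_TYPES:
        raise ValueError(f"unsupported content type: {content_type}")
    if not data:
        raise ValueError("image is empty")
    if len(data) > MAX_IMAGE_BYTES:
        raise ValueError("image exceeds size limit")

def clean_text(text: str) -> str:
    """Drop non-printable characters (keeping newlines and tabs) and enforce a length cap."""
    cleaned = "".join(ch for ch in text if ch.isprintable() or ch in "\n\t").strip()
    if not cleaned:
        raise ValueError("text is empty")
    if len(cleaned) > MAX_TEXT_CHARS:
        raise ValueError("text exceeds length limit")
    return cleaned
```

Rejecting bad input before the API call also avoids paying for requests that would fail anyway.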

Caching Strategies

Many ML predictions are deterministic: the same input produces identical output. Implement caching to reduce API costs and improve response times:

- Exact match caching: Store results keyed by input hash for identical requests

- Approximate caching: For fuzzy matching use cases, cache results for similar inputs

- Time-based eviction: Refresh cached predictions periodically to capture model updates
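Exact-match caching with time-based eviction can be sketched as follows. The class and parameter names are illustrative, and the in-memory dictionary stands in for whatever cache store you actually use.

```python
import hashlib
import time
from typing import Callable

class PredictionCache:
    def __init__(self, ttl_seconds: float = 3600.0,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store: dict[str, tuple[float, dict]] = {}  # key -> (stored_at, result)

    def get_or_compute(self, payload: bytes, compute: Callable[[bytes], dict]) -> dict:
        """Return a cached result for identical input, or call compute() and cache it."""
        key = hashlib.sha256(payload).hexdigest()  # exact-match key from the input hash
        entry = self._store.get(key)
        if entry is not None and self.clock() - entry[0] < self.ttl:
            return entry[1]
        result = compute(payload)  # cache miss or expired entry: call the API
        self._store[key] = (self.clock(), result)
        return result
```

Here `compute` would be the function that calls the ML API. The TTL controls how long a prediction is reused before the next request refreshes it.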

Rate Limiting Compliance

Respect API rate limits to avoid service disruption. Implement request throttling on your side, queue requests during high-traffic periods, and monitor usage to stay within quotas.
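Client-side throttling is often implemented with a token bucket. The sketch below is one such approach under assumed parameters; the rate and capacity should mirror the quota your provider publishes.

```python
import time
from typing import Callable

class TokenBucket:
    def __init__(self, rate_per_sec: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate_per_sec      # tokens added per second
        self.capacity = capacity      # maximum burst size
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def try_acquire(self) -> bool:
        """Return True and consume a token if a request may be sent now."""
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

When `try_acquire()` returns False, the caller can place the request on a queue and retry later instead of sending it and hitting the provider's limit.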

Real-World Use Cases

ML APIs power applications across industries. Here are representative examples demonstrating practical implementation.

E-Commerce Product Search

A mid-size retailer implemented visual search using Google Cloud Vision API. Customers can upload photos of products they want, and the API identifies similar items in the retailer’s catalog. Implementation required three weeks of development work, including image preprocessing and catalog indexing. Results showed a 23% increase in search-to-purchase conversion rates.

Technical details: Images are resized and converted before API calls. Results are ranked by similarity scores and filtered by inventory availability. The system handles approximately 50,000 visual searches monthly.
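The ranking and filtering step can be illustrated with a short sketch. This is not the retailer's actual code: the `Match` type, its fields, and the sample data are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Match:
    sku: str
    similarity: float  # higher means more similar to the uploaded photo
    in_stock: bool

def rank_matches(matches: list[Match], limit: int = 10) -> list[Match]:
    """Keep in-stock items and order them by descending similarity."""
    available = [m for m in matches if m.in_stock]
    return sorted(available, key=lambda m: m.similarity, reverse=True)[:limit]

# Hypothetical candidates returned by a visual similarity lookup.
candidates = [
    Match("SKU-A", 0.70, True),
    Match("SKU-B", 0.90, False),
    Match("SKU-C", 0.80, True),
]
ranked = rank_matches(candidates)
```

In this made-up example, `SKU-B` scores highest but is dropped because it is out of stock, leaving `SKU-C` ahead of `SKU-A`.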

Customer Support Automation

A SaaS company deployed OpenAI’s GPT-4 API to power an intelligent support chatbot. The system routes common questions to an AI assistant while escalating complex issues to human agents. Implementation included fine-tuning on company documentation and support history.

Results after six months: Automated resolution of 67% of incoming tickets, 40% reduction in average response time, and customer satisfaction scores maintained at 94%.

Content Moderation

A social media platform implemented AWS Rekognition and Amazon Comprehend for automated content moderation. The system scans uploaded images and text for policy violations, flagging content for human review.

Performance metrics: 99.2% accuracy on image moderation, 94% on text analysis, with human reviewers handling approximately 3,000 flagged items daily.

Common Challenges and Solutions

Despite their accessibility, ML API implementations face recurring challenges.

Handling API Downtime

ML APIs are cloud services subject to outages. Build redundancy by:

- Maintaining accounts with multiple providers for critical capabilities

- Implementing graceful degradation (fallback rules or default responses)

- Using queue systems to buffer requests during brief outages

- Establishing on-call alerting for extended service disruptions
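Provider redundancy and graceful degradation can be combined in one helper, sketched below. The function name and the shape of the `default` response are assumptions; each entry in `providers` would wrap a different vendor's API.

```python
from typing import Callable, Optional, Sequence

def predict_with_fallback(
    payload: str,
    providers: Sequence[Callable[[str], dict]],
    default: Optional[dict] = None,
) -> dict:
    """Try each provider in order; fall back to a default response if all fail."""
    last_error: Optional[Exception] = None
    for provider in providers:
        try:
            return provider(payload)
        except Exception as exc:  # broad on purpose for this sketch; narrow it in real code
            last_error = exc
    if default is not None:
        return default  # graceful degradation
    raise RuntimeError("all providers failed and no default was given") from last_error
```

Ordering `providers` by preference keeps the primary vendor in use under normal conditions, while the default response lets the feature degrade instead of failing outright.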

Managing Costs at Scale

API costs can escalate quickly at scale. Control spending through:

- Implementing request budgets and alerts

- Caching aggressively where appropriate

- Using batch processing for non-time-sensitive tasks

- Selecting dedicated instances or enterprise plans for high-volume workloads

- Regularly auditing usage patterns
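A request budget with an alert threshold can be as simple as the sketch below. The class name, the 80% alert level, and the status strings are illustrative choices, not a provider feature.

```python
class BudgetTracker:
    def __init__(self, monthly_budget: float, alert_fraction: float = 0.8):
        self.monthly_budget = monthly_budget
        self.alert_fraction = alert_fraction  # share of budget that triggers an alert
        self.spent = 0.0

    def record(self, cost: float) -> str:
        """Add a request's cost and return 'ok', 'alert', or 'exceeded'."""
        self.spent += cost
        if self.spent >= self.monthly_budget:
            return "exceeded"
        if self.spent >= self.alert_fraction * self.monthly_budget:
            return "alert"
        return "ok"
```

The application can notify the team on `"alert"` and stop or downgrade non-essential calls on `"exceeded"`.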

Dealing with Inaccurate Predictions

No ML model is perfect. When API results don’t meet accuracy requirements:

- Implement confidence thresholds and route uncertain predictions for human review

- Combine multiple APIs for cross-validation

- Provide feedback to providers (many accept accuracy reports)

- Consider fine-tuning or custom model training for domain-specific improvements
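Confidence-based routing, the first item above, might be sketched like this. The 0.85 threshold and the action names are assumptions to tune against your own error tolerance.

```python
def route_prediction(label: str, confidence: float, threshold: float = 0.85) -> dict:
    """Accept confident predictions automatically; send the rest to human review."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    action = "auto_accept" if confidence >= threshold else "human_review"
    return {"label": label, "confidence": confidence, "action": action}

accepted = route_prediction("invoice", 0.97)
flagged = route_prediction("invoice", 0.60)
```

Raising the threshold sends more items to reviewers but lowers the share of wrong predictions that pass through automatically.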

Future Trends in ML APIs

The ML API landscape continues evolving rapidly. Key trends shaping the future include:

Multimodal capabilities: APIs that process and generate multiple data types—text, images, audio, video—within single requests are becoming mainstream. OpenAI’s GPT-4V and Google’s Gemini exemplify this trend.

Smaller, faster models: Compression techniques like quantization enable powerful models that run faster and cheaper. Expect more on-device and edge deployment options.

Specialized industry models: Pre-trained models specifically fine-tuned for healthcare, legal, finance, and other verticals will reduce implementation complexity for domain-specific applications.

Lower barriers to customization: Transfer learning and fine-tuning capabilities are becoming more accessible, allowing organizations to adapt general APIs to their specific needs without massive data requirements.

Frequently Asked Questions

What programming languages support ML APIs?

Most ML API providers offer SDKs for Python, JavaScript/TypeScript, Java, C#, Go, and Ruby. Python has the broadest support and is recommended for ML-focused projects. REST API access is universal, so any language with HTTP capabilities can integrate.

How much do ML APIs cost?

Pricing ranges from free tiers (typically 1,000-5,000 requests per month) to enterprise contracts costing hundreds of thousands of dollars annually. Per-request prices range from $0.001 for simple tasks to $15+ for complex LLM interactions. Most providers offer calculators to estimate costs based on expected usage.

Can I use ML APIs offline?

Most ML APIs require internet connectivity to send data to cloud servers. However, some providers offer on-premises solutions (like AWS SageMaker Edge Manager) or edge SDKs that run lightweight models locally. These options suit applications requiring offline operation or minimal latency.

How accurate are ML API predictions?

Accuracy varies by task, provider, and input quality. Image recognition APIs commonly achieve 95%+ accuracy on standard benchmarks. NLP tasks like sentiment analysis typically range 85-95% accuracy. Complex tasks like document understanding vary more widely. Always test with your specific data to determine actual performance.

Do ML APIs keep my data?

Data policies differ significantly between providers. Major cloud providers generally don’t use customer data to train public models, but may access it for service improvement. Enterprise agreements often include data isolation guarantees. Always review privacy policies and consider data sensitivity before implementation.

Conclusion

Machine learning APIs have democratized artificial intelligence, enabling developers without ML expertise to integrate sophisticated intelligence into applications. The key to successful implementation lies in selecting the right provider for your specific needs—considering accuracy, cost, latency, privacy, and developer experience.

Start with free tiers to evaluate capabilities before committing. Implement robust error handling and caching from the beginning. Monitor costs closely as usage scales. And stay informed about the rapid developments in this space, as new capabilities and providers emerge continuously.

The most successful implementations treat ML APIs as components within larger systems, not magic solutions. Combine them with proper architecture, human oversight where needed, and continuous evaluation of performance against your specific requirements.