What Is Artificial Intelligence? A Clear Guide for Beginners

Artificial intelligence has become one of the most transformative technologies of our time, yet many people still wonder: what exactly is AI, and how does it work? Whether you’ve encountered it through ChatGPT, self-driving cars, or Netflix recommendations, understanding artificial intelligence is increasingly essential in today’s world. This guide breaks down the fundamentals of AI in plain language, exploring its history, types, applications, and what the future holds—all without requiring a technical background.

Understanding Artificial Intelligence

At its core, artificial intelligence refers to computer systems designed to perform tasks that typically require human intelligence. These tasks include recognizing speech, making decisions, solving problems, translating languages, and identifying patterns in data. The term was coined by computer scientist John McCarthy in the 1955 proposal for the Dartmouth Summer Research Project on Artificial Intelligence. That seminal 1956 conference brought together researchers who believed machines could be programmed to simulate human thought processes.

Modern AI encompasses a broad range of techniques and approaches. What distinguishes most of today’s systems from traditional software is their ability to learn from data and improve over time, without being explicitly programmed for every scenario. Instead of following rigid, predefined rules, these systems identify patterns in information and use those patterns to make predictions or decisions. This approach, called machine learning, allows AI to handle situations its creators never specifically programmed it to address.

The field has evolved dramatically since its inception. It has progressed from simple rule-based systems to sophisticated neural networks capable of generating human-like text and creating original images. Along the way, AI programs have beaten the world’s top players at complex games like chess and Go. Today, AI permeates countless aspects of daily life, often operating invisibly behind the scenes in applications people use every day.

A Brief History of AI

The idea of thinking machines is much older than the field itself. Philosophers and mathematicians speculated about it for centuries, but the formal field emerged in the mid-20th century. In 1950, Alan Turing published his influential paper “Computing Machinery and Intelligence.” In it he proposed the “imitation game,” now known as the Turing Test, as a way to judge machine intelligence. The test is still widely debated in AI discussions today.

The 1956 Dartmouth conference marked the birth of AI as an academic discipline. Early optimism led to significant breakthroughs, including the first AI programs that could play chess and solve algebraic word problems. However, the limitations of early computing power and the complexity of human cognition soon became apparent. When results fell short of expectations, funding and interest dropped sharply during periods known as “AI winters.” The first came in the mid-1970s and the second in the late 1980s, and both slowed progress considerably.

The modern AI revolution began around 2012. That year, a deep learning system dramatically outperformed earlier approaches in the ImageNet image-recognition competition. Deep learning is a technique that uses artificial neural networks with many layers to recognize complex patterns. Breakthroughs in image recognition, speech processing, and natural language understanding followed rapidly. In late 2022, the public release of ChatGPT, a chatbot built on a large language model, brought generative AI into mainstream consciousness. Its capabilities seemed like science fiction only a few years earlier.

Types of Artificial Intelligence

Understanding AI requires recognizing that not all AI systems are created equal. Researchers typically categorize artificial intelligence into three broad types based on capability.

Narrow AI (or weak AI) describes systems designed to perform specific tasks within limited domains. Every AI application existing today falls into this category. Your phone’s voice assistant, recommendation algorithms on streaming services, and email spam filters all represent narrow AI—they excel at particular jobs but cannot generalize beyond their training. These systems can sometimes outperform humans in their specific domains but lack the broad reasoning capabilities of human intelligence.

General AI (or strong AI) refers to machines with the capacity to understand, learn, and apply knowledge across diverse tasks, essentially matching human cognitive abilities. This type of AI remains theoretical; despite remarkable progress, no system has achieved true general intelligence. Researchers at organizations like DeepMind and OpenAI continue working toward this goal. Expert estimates of when (or whether) it will arrive vary widely, from within a decade to many decades away.

Superintelligent AI represents a hypothetical future system that would surpass human intelligence across virtually every domain. This concept, popularized by philosopher Nick Bostrom in his 2014 book Superintelligence, raises profound questions about humanity’s future. Opinions differ sharply. Some researchers are optimistic about the benefits advanced AI could bring. Others have publicly warned about its risks, including Geoffrey Hinton, often called one of the “godfathers of AI.” Many emphasize the need for careful governance to ensure advanced AI systems remain aligned with human values.

How AI Works: Machine Learning Explained

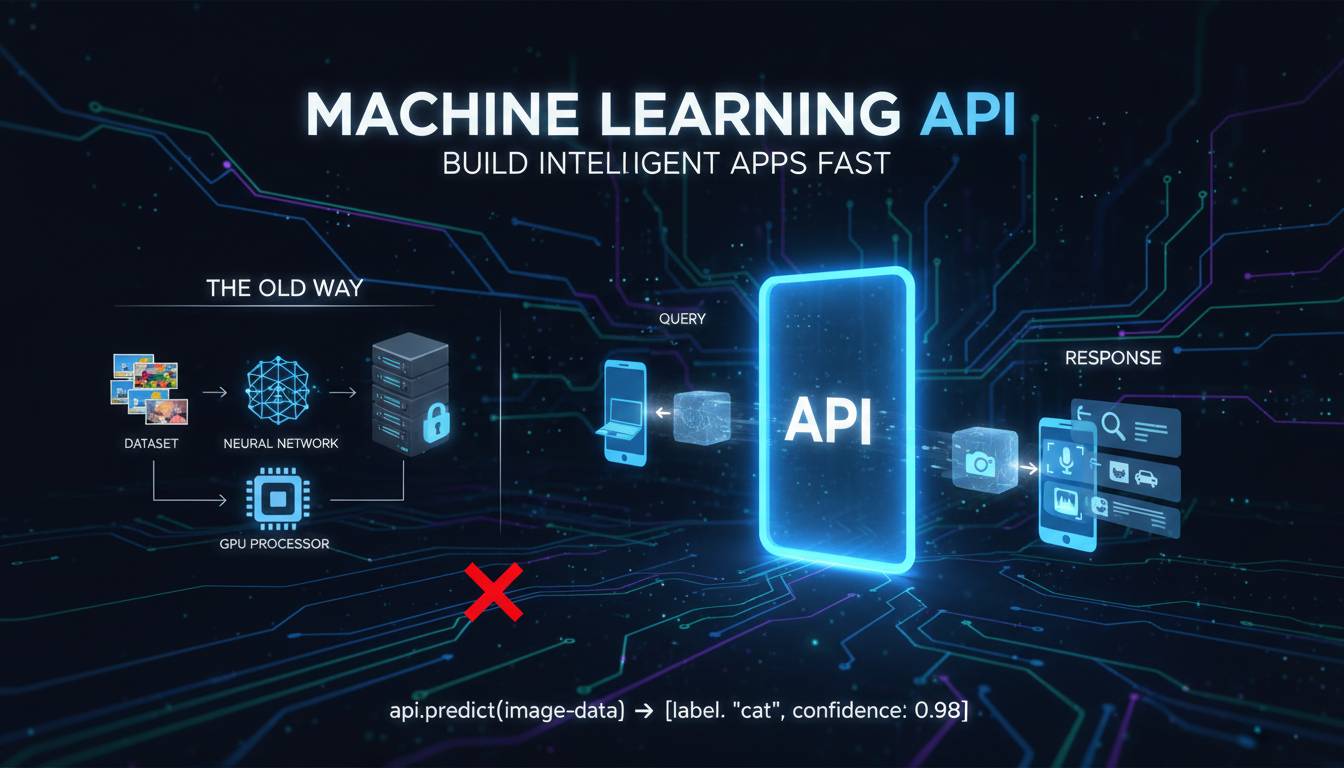

Understanding how AI works begins with machine learning—the technique that enables systems to learn from data rather than following explicit programming instructions. Traditional software receives detailed instructions (algorithms) that tell it exactly what to do. Machine learning takes a different approach: developers create algorithms that can identify patterns in data, and the system improves its performance as it processes more information.
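Here is a deliberately tiny Python sketch of that contrast. It is our own illustration, not how any real spam filter works. We assume each message has been reduced to a single hypothetical feature: the number of exclamation marks it contains. The sketch then compares a cutoff a programmer picks by hand with one the program learns from labeled examples.

```python
def rule_based_is_spam(exclamation_count: int) -> bool:
    # Traditional approach: a programmer hard-codes the cutoff.
    return exclamation_count > 5


def learn_threshold(examples: list[tuple[int, bool]]) -> int:
    """Learn the cutoff that misclassifies the fewest labeled examples.

    Each example is (exclamation_count, is_spam). A message is predicted
    to be spam when its count is greater than the threshold.
    """
    counts = sorted({count for count, _ in examples})
    # Also try a cutoff below every observed count ("everything is spam").
    candidates = [counts[0] - 1] + counts
    best_threshold, best_errors = candidates[0], len(examples) + 1
    for threshold in candidates:
        errors = sum((count > threshold) != is_spam for count, is_spam in examples)
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


# Hypothetical labeled training data: (exclamation marks, is it spam?)
training_data = [(0, False), (1, False), (2, False), (1, False),
                 (5, True), (6, True), (8, True), (7, True)]

learned_threshold = learn_threshold(training_data)


def learned_is_spam(exclamation_count: int) -> bool:
    # Machine-learning approach: the cutoff came from the data, not the programmer.
    return exclamation_count > learned_threshold


print(learned_threshold)
print(rule_based_is_spam(4), learned_is_spam(4))
```

If you feed the learner different examples, the learned cutoff shifts automatically. The hand-written rule only changes if someone edits the code.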

The most powerful modern AI systems rely on neural networks, computing structures inspired by the biological neural networks in animal brains. These networks consist of interconnected nodes (neurons) arranged in layers. When data enters the network, it passes through multiple layers, each layer transforming the information slightly. Early layers might detect simple features like edges in an image, while deeper layers recognize complex combinations—eventually identifying faces, objects, or scenes.
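A few lines of plain Python can sketch that layer-by-layer flow of data. This is a hypothetical toy network with 2 inputs, 3 hidden neurons, and 1 output, and its weights are simply made up. Real networks have thousands to billions of weights and learn them from data. They are usually built with libraries such as PyTorch or TensorFlow rather than by hand.

```python
import math


def relu(x: float) -> float:
    # A common activation: pass positive values through, zero out negatives.
    return max(0.0, x)


def sigmoid(x: float) -> float:
    # Squash any number into the range (0, 1), handy for a final "score".
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases, activation):
    """Each neuron takes a weighted sum of all inputs, adds its bias,
    and passes the result through the activation function."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Made-up weights for illustration only (a trained network would learn these).
HIDDEN_WEIGHTS = [[0.5, -0.2], [0.3, 0.8], [-0.6, 0.1]]
HIDDEN_BIASES = [0.0, 0.1, 0.2]
OUTPUT_WEIGHTS = [[1.0, -1.0, 0.5]]
OUTPUT_BIASES = [0.0]


def forward(inputs):
    # Data flows layer by layer: input -> hidden layer -> output layer.
    hidden = dense_layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES, relu)
    return dense_layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES, sigmoid)[0]


print(forward([1.0, 2.0]))
```

Stacking more `dense_layer` calls between input and output is, in essence, what makes a network “deep.”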

Training these networks requires large amounts of data and substantial computational power. During training, the system adjusts the strengths (weights) of the connections between neurons to reduce the errors in its predictions. This process, often called “learning,” can require thousands or millions of iterations. A system trained to recognize cats, for example, examines a large collection of labeled images, some showing cats and some not. It gradually adjusts its internal parameters until it can accurately identify cats in new images it has never seen before.
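The smallest possible network shows this “adjust to reduce errors” loop: a single neuron, equivalent to logistic regression, trained by gradient descent. The data, learning rate, and number of passes below are arbitrary choices for illustration. Real training follows the same idea, but over millions of parameters and examples.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical labeled examples: (measurement, label); label 1 for positive measurements.
DATA = [(-2.0, 0), (-1.5, 0), (-1.0, 0), (1.0, 1), (1.5, 1), (2.0, 1)]


def average_loss(w: float, b: float) -> float:
    # Log loss: near 0 for confident correct predictions, large for confident wrong ones.
    total = 0.0
    for x, y in DATA:
        p = sigmoid(w * x + b)
        total -= math.log(p if y == 1 else 1.0 - p)
    return total / len(DATA)


def train(learning_rate: float = 0.1, epochs: int = 2000):
    w, b = 0.0, 0.0  # start knowing nothing: every prediction is 0.5
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in DATA:
            error = sigmoid(w * x + b) - y  # how far off this prediction is
            grad_w += error * x
            grad_b += error
        # Nudge each parameter a small step in the direction that lowers the loss.
        w -= learning_rate * grad_w / len(DATA)
        b -= learning_rate * grad_b / len(DATA)
    return w, b


w, b = train()
print(f"loss before: {average_loss(0.0, 0.0):.3f}, after: {average_loss(w, b):.3f}")
print("prediction for unseen 1.2:", round(sigmoid(w * 1.2 + b), 3))
```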

Deep learning extends this concept by using networks with many layers (hence “deep”). This approach has driven most recent AI breakthroughs, from speech recognition to language translation to generative AI systems that create original content.

Key AI Techniques and Concepts

Several fundamental techniques underpin modern AI systems, each suited to different types of problems.

Natural Language Processing (NLP) enables machines to understand, interpret, and generate human language. Applications range from translation services and chatbots to sentiment analysis and content summarization. Modern large language models (LLMs) like GPT-4 represent a significant advancement in NLP, demonstrating remarkable fluency in generating text that closely mimics human writing.
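Modern LLMs are far too large to sketch here. Sentiment analysis, one of the NLP tasks mentioned above, does have a classic small-scale approach: a Naive Bayes classifier that learns which words tend to appear in positive versus negative text. What follows is a simplified illustration on a made-up six-sentence dataset, not how GPT-4 or other LLMs work.

```python
import math
from collections import Counter

# Made-up training sentences, each labeled positive or negative.
TRAINING = [
    ("i love this movie", "pos"),
    ("what a great film", "pos"),
    ("great acting and a lovely story", "pos"),
    ("i hate this movie", "neg"),
    ("what a boring film", "neg"),
    ("terrible acting and a dull story", "neg"),
]


def train_naive_bayes(examples):
    """Count how often each word appears under each label."""
    word_counts = {"pos": Counter(), "neg": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    vocabulary = set(word_counts["pos"]) | set(word_counts["neg"])
    return word_counts, label_counts, vocabulary


def classify(text, model):
    word_counts, label_counts, vocabulary = model
    total_examples = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        # Log prior: how common this label is in the training data.
        score = math.log(label_counts[label] / total_examples)
        total_words = sum(word_counts[label].values())
        for word in text.split():
            if word not in vocabulary:
                continue  # skip words never seen during training
            # Add-one (Laplace) smoothing so a word unseen under one label doesn't zero it out.
            score += math.log((word_counts[label][word] + 1) / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


model = train_naive_bayes(TRAINING)
print(classify("a great story", model))
print(classify("such a boring dull film", model))
```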

Computer Vision allows AI systems to interpret and analyze visual information from the world. Beyond simple image recognition, computer vision enables facial recognition, medical image diagnosis, autonomous vehicle navigation, and quality control in manufacturing. On some standard benchmarks, systems now identify objects in photographs with accuracy matching or exceeding human performance.
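Many computer vision systems are built from an operation called convolution. A small grid of numbers, called a filter or kernel, slides across an image, and the system computes a weighted sum at each position. In a trained neural network, the filter values are learned from data. This plain-Python sketch instead hand-picks a Sobel-style vertical-edge filter and applies it to a made-up 5-by-5 grayscale image.

```python
def convolve2d(image, kernel):
    """'Valid' 2D convolution: slide the kernel over every position where it
    fully fits and sum the element-wise products. (Strictly this is
    cross-correlation, which is what most deep learning libraries compute.)"""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Made-up 5x5 grayscale image: dark (0) on the left, bright (9) on the right.
image = [[0, 0, 9, 9, 9] for _ in range(5)]

# Hand-picked vertical-edge filter (Sobel-style); a network would learn its own.
vertical_edge = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

response = convolve2d(image, vertical_edge)
for row in response:
    print(row)
```

The large values mark where dark pixels meet bright ones, and the zeros mark the flat, uniformly bright region. Early layers of image-recognition networks often learn filters that behave much like this one.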

Reinforcement Learning trains AI systems through trial and error, rewarding desired behaviors and penalizing undesired ones. This technique has produced remarkable results in game-playing AI, from chess to Go to complex video games. In 2016, AlphaGo defeated Lee Sedol, one of the world’s strongest Go players. The victory demonstrated reinforcement learning’s potential to discover strategies that human players had never considered.
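AlphaGo’s training combined deep neural networks, search, and enormous amounts of computation, far beyond what a short example can show. The core trial-and-error idea, though, fits in a few lines. Below is tabular Q-learning, a classic reinforcement learning algorithm, applied to a hypothetical five-cell corridor. We chose Q-learning for illustration; it is not AlphaGo’s actual method. The agent is rewarded only for reaching the far end of the corridor. The learning rate, discount, and exploration settings are arbitrary.

```python
import random

N_CELLS = 5          # cells 0..4 in a hypothetical corridor
GOAL = N_CELLS - 1   # reaching the last cell earns a reward
ACTIONS = (-1, +1)   # step left or step right


def step(state, action):
    """Environment rules: move, stay inside the corridor, reward 1 at the goal."""
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    # q[(state, action)] estimates the long-term reward of taking `action` in `state`.
    q = {(s, a): 0.0 for s in range(N_CELLS) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)  # explore: try something at random
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                # exploit: pick the best-looking action, breaking ties randomly
                action = rng.choice([a for a in ACTIONS if q[(state, a)] == best])
            next_state, reward, done = step(state, action)
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            # Move the estimate toward reward + discounted value of what comes next.
            q[(state, action)] += alpha * (reward + gamma * future - q[(state, action)])
            state = next_state
    return q


q = q_learning()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)]
print(policy)
```

After training, the learned policy should be to step right in every cell. The agent discovers this strategy purely from rewards, without ever being told where the goal lies.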

Generative AI refers to systems that create new content (text, images, audio, or video) based on patterns learned from training data. Tools like DALL-E and Midjourney (image generation) and ChatGPT (text generation) have brought generative AI into mainstream use. These systems don’t “create” in the human sense; rather, they recombine patterns from their training data in novel ways.
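A bigram Markov chain illustrates the idea of recombining learned patterns, using a technique far simpler than the neural networks behind ChatGPT. It records which word follows which in a small made-up text. It then generates new sequences by sampling from those observations. The chain can produce sentences such as “the dog sat on the mat,” which never appears in its training text, yet every word-to-word step it takes was seen during training.

```python
import random
from collections import defaultdict

# Made-up training text.
corpus = "the cat sat on the mat . the dog sat on the rug . the cat saw the dog ."


def build_bigram_model(text):
    """Record, for each word, every word that followed it in the training text."""
    followers = defaultdict(list)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(model, start, max_words=10, seed=None):
    rng = random.Random(seed)
    words = [start]
    while len(words) < max_words and words[-1] in model:
        # Sample the next word in proportion to how often it followed the current one.
        words.append(rng.choice(model[words[-1]]))
    return " ".join(words)


model = build_bigram_model(corpus)
print(generate(model, "the", seed=1))
```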

Real-World Applications of AI

AI technology now touches virtually every industry, transforming how businesses operate and how people live their daily lives.

In healthcare, AI assists diagnosis by analyzing medical images to detect conditions like cancer, diabetic retinopathy, and heart abnormalities. Pharmaceutical companies use AI to accelerate drug discovery, identifying promising compounds that might take human researchers years to find. During the COVID-19 pandemic, AI systems helped track virus spread and identify potential treatments.

Finance relies heavily on AI for fraud detection, analyzing transaction patterns to identify suspicious activity in real time. Many algorithmic trading systems use AI to make rapid investment decisions based on market conditions. Banks also employ AI for credit scoring, aiming to assess borrower risk more accurately than traditional methods.
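Real fraud-detection systems use machine-learning models trained on many signals at once. The sketch below is a deliberately simple stand-in for the core idea of flagging transactions that break an established pattern. It computes a z-score: how many standard deviations a new amount sits from a customer’s past spending. The purchase history and the cutoff of 3 standard deviations are hypothetical.

```python
import statistics


def flag_unusual(history, new_amounts, cutoff=3.0):
    """Return the new amounts that lie more than `cutoff` standard
    deviations away from the average of past transactions."""
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)  # sample standard deviation; needs 2+ values
    return [amount for amount in new_amounts if abs(amount - mean) / stdev > cutoff]


# Hypothetical past purchases (in dollars) for one customer.
past_purchases = [42.0, 18.5, 60.0, 35.0, 27.5, 51.0, 39.0, 22.0]

print(flag_unusual(past_purchases, [45.0, 30.0, 950.0]))
```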

Transportation is being revolutionized by AI. Autonomous vehicles use computer vision and sensor fusion to navigate roads, while AI optimizes logistics and delivery routes. Ride-sharing services employ AI to match drivers with passengers and to adjust prices based on demand.

Entertainment and media companies use AI to personalize content recommendations, as seen with Netflix, Spotify, and YouTube. News organizations use AI to generate routine financial and sports reports, freeing journalists for investigative work. Movie studios increasingly use AI in script analysis and marketing.

AI in Everyday Life

Beyond specialized applications, AI has become deeply embedded in consumer technology people use constantly. Smart assistants like Siri, Alexa, and Google Assistant use speech recognition and natural language processing to respond to voice commands. Email spam filters learn to identify unwanted messages. Navigation apps like Google Maps and Waze use AI to analyze traffic patterns and recommend optimal routes in real time.
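Route recommendation can be thought of as two parts. The first is predicting how long each road segment will take, which is where learning from traffic data comes in. The second is searching for the fastest combination of segments. Below is a minimal sketch of the search half using Dijkstra’s algorithm, a classic shortest-path method. It assumes the predicted travel times are already known, and here they are made up. It is not a description of how Google Maps or Waze actually work.

```python
import heapq


def fastest_route(graph, start, goal):
    """Dijkstra's algorithm. `graph` maps each place to {neighbor: minutes}."""
    queue = [(0.0, start, [start])]  # (minutes so far, current place, path taken)
    visited = set()
    while queue:
        minutes, place, path = heapq.heappop(queue)  # always expand the quickest option first
        if place == goal:
            return minutes, path
        if place in visited:
            continue
        visited.add(place)
        for neighbor, cost in graph.get(place, {}).items():
            if neighbor not in visited:
                heapq.heappush(queue, (minutes + cost, neighbor, path + [neighbor]))
    return float("inf"), []  # goal unreachable


# Hypothetical travel times in minutes, as if predicted from current traffic.
predicted_minutes = {
    "home": {"highway_ramp": 4, "main_street": 6},
    "highway_ramp": {"office": 25},  # highway is congested right now
    "main_street": {"side_road": 7},
    "side_road": {"office": 5},
}

print(fastest_route(predicted_minutes, "home", "office"))
```

Suppose the predicted highway time dropped to, say, 8 minutes. The same search would then return the 12-minute highway route instead. The “intelligence” lies as much in the travel-time predictions as in the search.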

Social media platforms employ AI for content curation, deciding which posts users see in their feeds. Online shopping recommendations, from Amazon’s “frequently bought together” to personalized homepage layouts, rely on AI analyzing purchase and browsing history. Even smartphone cameras use AI to enhance images automatically, detecting scenes and adjusting settings for optimal results.
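Retailers’ actual recommendation systems are proprietary and far more sophisticated. One simple idea behind a “frequently bought together” list is counting co-occurrence: how often other items appear in the same orders as the one you are viewing. Here is a sketch with made-up orders:

```python
from collections import Counter

# Hypothetical past orders, each a set of items bought together.
ORDERS = [
    {"camera", "memory card", "tripod"},
    {"camera", "memory card"},
    {"camera", "memory card", "camera bag"},
    {"camera", "tripod"},
    {"tripod", "phone case"},
]


def frequently_bought_with(item, orders, top_n=2):
    """Rank other items by how many orders contained them alongside `item`."""
    together = Counter()
    for order in orders:
        if item in order:
            together.update(other for other in order if other != item)
    return [other for other, _ in together.most_common(top_n)]


print(frequently_bought_with("camera", ORDERS))
```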

This ubiquity means interacting with AI has become routine for billions of people, even those unaware they’re using it. Understanding these interactions helps demystify AI and prepares people to engage more thoughtfully with increasingly intelligent systems.

The Future of Artificial Intelligence

Predicting AI’s future trajectory involves significant uncertainty, but certain trends appear likely. AI capabilities are expected to keep expanding, with systems handling increasingly complex tasks with less human oversight. Multimodal AI represents a major frontier. These systems process text, images, audio, and video together, enabling more natural human-computer interaction.

The economic impact promises to be substantial. McKinsey’s research estimates that AI could contribute approximately $13 trillion to the global economy by 2030, transforming industries from manufacturing to professional services. However, this transformation raises important questions about workforce displacement, requiring societies to consider retraining programs and new economic models.

AI governance has become a critical concern. Governments worldwide are developing regulations to address issues like algorithmic bias, privacy, and safety. The European Union’s AI Act represents one comprehensive approach, categorizing AI systems by risk level and imposing corresponding requirements. Meanwhile, organizations like the Partnership on AI bring together companies to establish best practices.

Experts remain divided on timeline and outcome. Some, like futurist Ray Kurzweil, predict human-level AI by 2029, while others expect it to take far longer. What nearly all agree on is that AI will profoundly shape the coming decades, making understanding its foundations increasingly valuable.

Conclusion

Artificial intelligence has evolved from an academic concept to a technology reshaping many aspects of modern life. The field encompasses many techniques and approaches, but its core promise remains constant: creating systems that can learn, adapt, and perform tasks requiring human intelligence. AI may ultimately help solve humanity’s greatest challenges or introduce unprecedented risks. Which outcome prevails depends on choices made today by researchers, companies, governments, and citizens.

For beginners, the key takeaway is that AI isn’t magic or science fiction—it’s a rapidly advancing technology built on fundamental principles of computer science and mathematics. Understanding these basics prepares you to engage more meaningfully with AI’s role in society, whether as a user, professional, or informed citizen. As AI continues its expansion into daily life, this foundational knowledge will only grow more valuable.

Frequently Asked Questions

Q: What is the simplest definition of artificial intelligence?

Artificial intelligence refers to computer systems designed to perform tasks that typically require human intelligence, such as recognizing patterns, making decisions, solving problems, and understanding language. What sets most modern AI apart from traditional software is that it can learn from data and improve its performance without being explicitly programmed for every possible scenario.

Q: How is machine learning different from artificial intelligence?

Machine learning is a subset of artificial intelligence—a technique that enables AI systems to learn from data rather than following fixed rules. While AI is the broader concept of machines performing intelligent tasks, machine learning specifically refers to the method by which AI systems improve through experience. Not all AI uses machine learning, but most modern AI applications do.

Q: Can AI really think and understand like humans?

Current AI systems, including large language models, do not possess genuine understanding or consciousness in the human sense. They generate responses based on statistical patterns learned from their training data, without comprehension or feelings as humans experience them. Whether AI could ever achieve true understanding remains a deep philosophical question that experts continue to debate.

Q: Is AI dangerous?

AI poses risks that require careful management, including potential job displacement, algorithmic bias perpetuating societal inequalities, privacy concerns from surveillance capabilities, and the long-term challenge of ensuring advanced AI systems remain aligned with human values. However, many AI researchers and developers actively work on safety measures and governance frameworks to address these concerns.

Q: How is AI used in everyday products?

Most people interact with AI daily through voice assistants (Siri, Alexa), streaming service recommendations (Netflix, Spotify), email spam filters, navigation apps with real-time traffic analysis, social media feeds, and online shopping recommendations. Even camera apps increasingly use AI for scene detection and image enhancement.

Q: Will AI replace human jobs?

AI will likely automate many tasks currently performed by humans, particularly routine and repetitive work. However, history suggests technology creates new categories of employment even as it displaces existing jobs. The more pressing concern is ensuring workers have opportunities to transition to new roles through education and training programs.